Half the people logging into patient portals aren’t patients. They’re caregivers, and the AI being built on top of those systems doesn’t know that.

In March 2026 I attended a Microsoft Copilot Health demo and a patient-centered AI panel hosted by the National Health Council. The technology was impressive. The conversation was thoughtful. And the caregiver — the person managing someone else’s health, signing forms under pressure, and navigating systems that were never built for them…

… was invisible.

This article reflects what I think needs to change before we build the next layer of health AI on the same blind spot.

The Demo

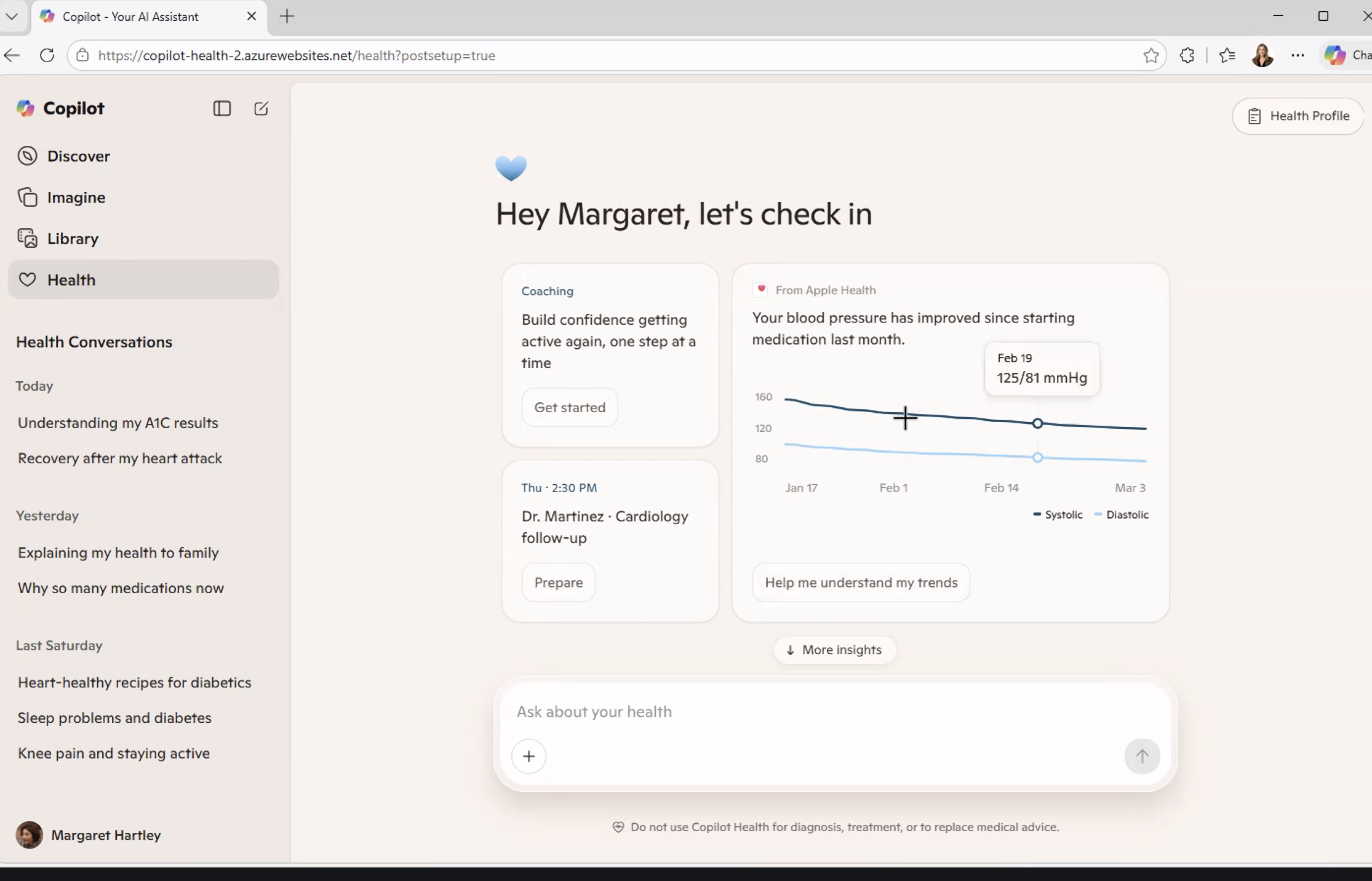

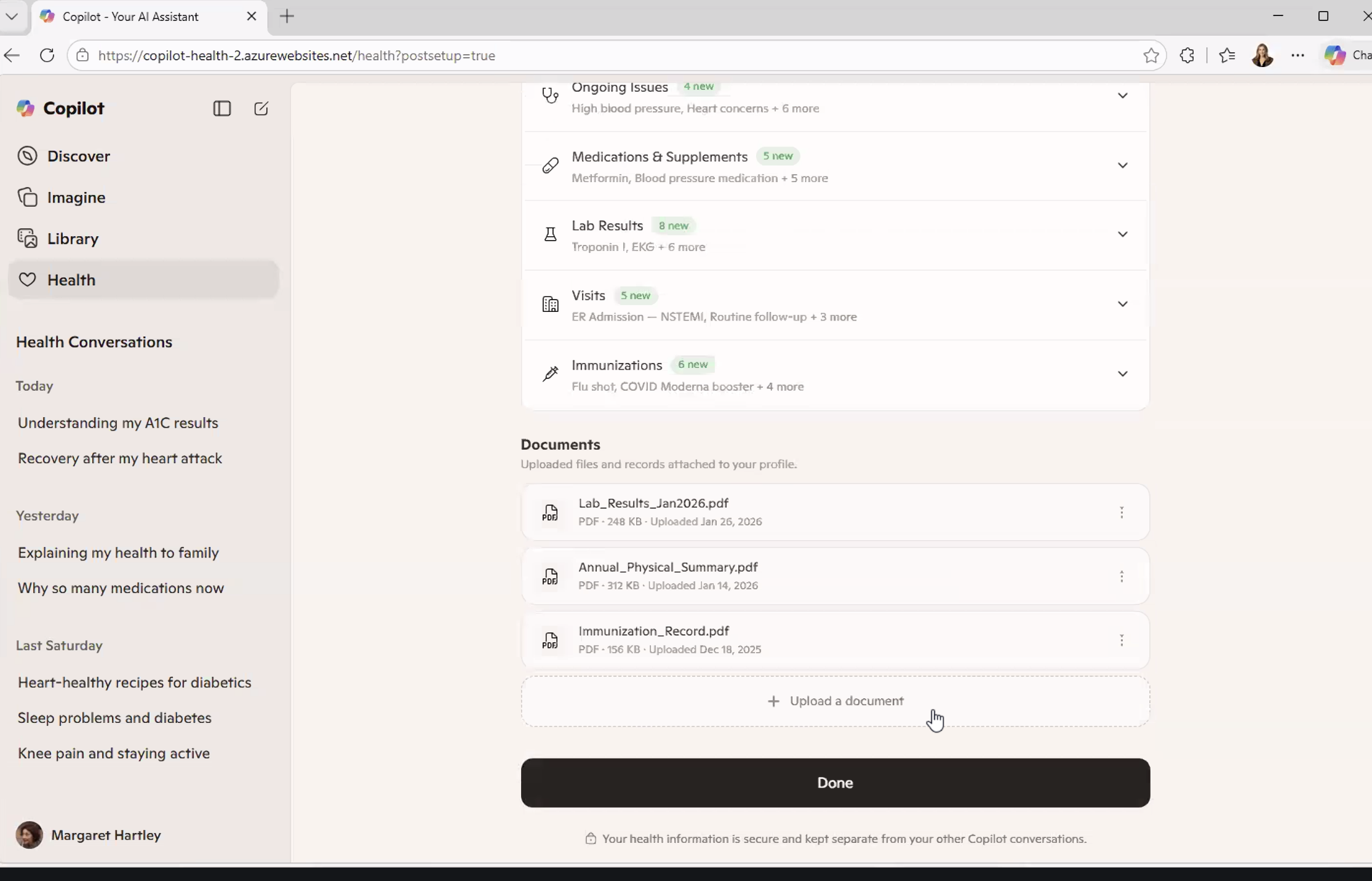

Copilot Health is a consumer-facing AI health companion that pulls from multiple data sources at once:

- Medical records from connected providers

- Lab results

- Wearable data from Apple Health or an Oura ring

- Previous health conversations from within the Copilot ecosystem

- Uploaded documents

It’s all synthesized into a single, conversational interface.

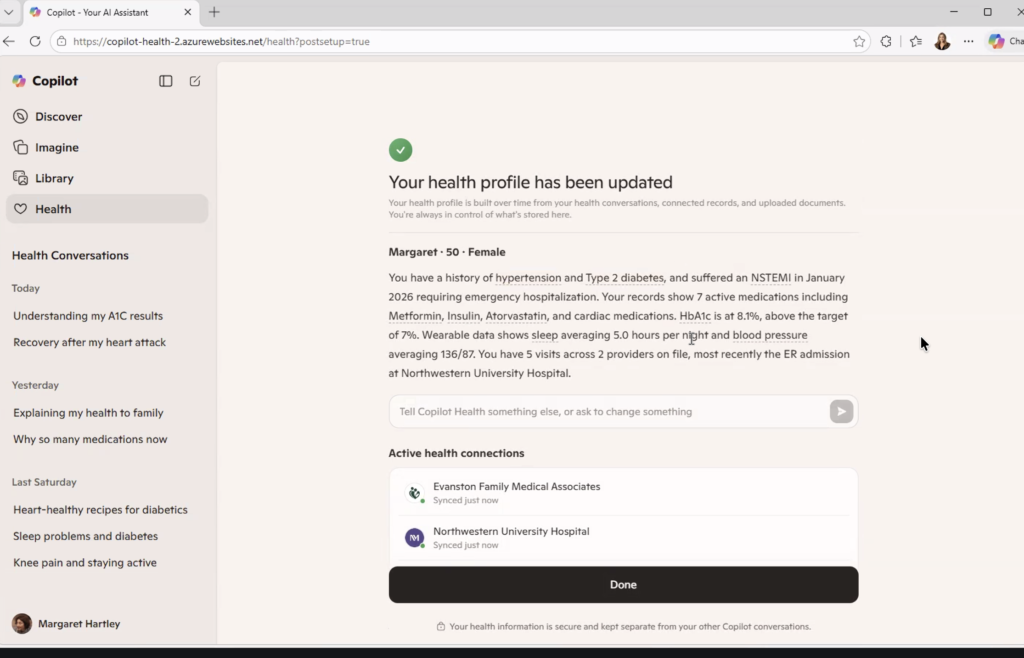

The demo persona was “Margaret.” She’s 50 years old with a history of hypertension, Type 2 diabetes, high cholesterol, and a recent heart attack (an NSTEMI requiring emergency hospitalization in January 2026). Her health profile showed 7 active medications, an HbA1c of 8.1% against a target of 7%, a resting blood pressure averaging 136/87, and sleep averaging 5 hours a night from her wearable data.

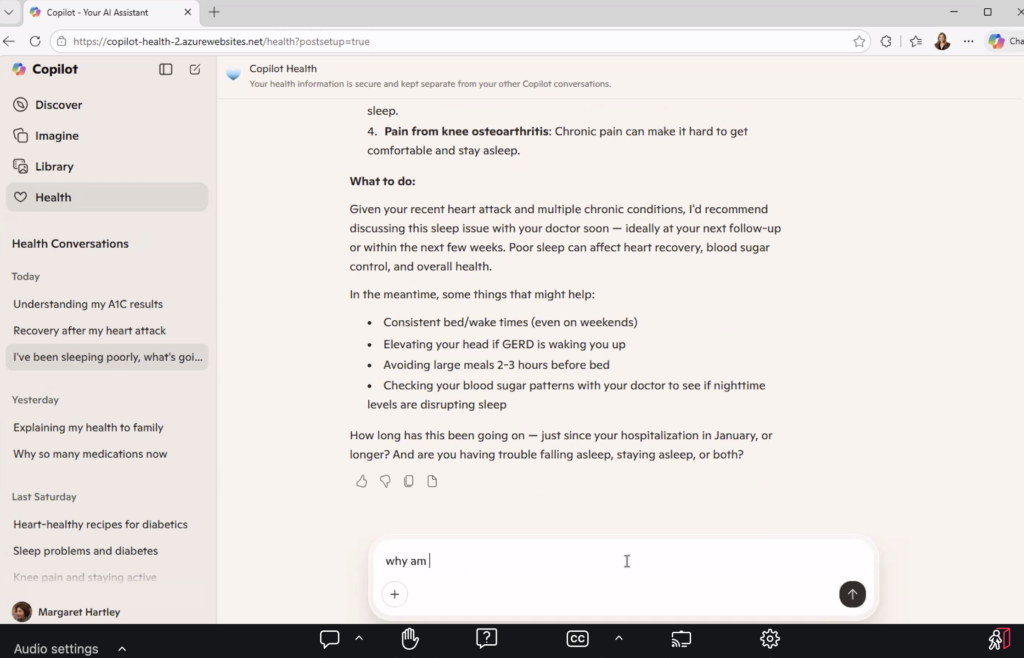

She asked why she was taking metoprolol, a common question after a serious cardiac event, when discharge summaries are hard to process in real time. The system explained the medication, what it does, and invited follow-up questions about side effects.

When she told Copilot that she’d woken up with severe jaw pain, it flagged the pain as a potential cardiac symptom and recommended calling 911 immediately.

That’s not a trivial capability. For someone managing multiple chronic conditions, trying to understand why they’re on seven medications, wondering whether a symptom is serious, this tool offers something the healthcare system rarely does: an answer, in plain language, right now.

Rachel Gruner led the demo for Microsoft and described the goal clearly: bring everything together, make it usable, help people navigate care. She named the 3 a.m. moment explicitly: someone searching for health answers because they can’t reach a doctor. Someone who needs information and has nowhere else to turn.

She was describing a patient. But she was also unknowingly describing a caregiver.

The Number Nobody Mentioned

Here is a statistic that did not come up once during the demo, nor during the hour-long panel that followed it.

According to the Health Information National Trends Survey (federal data collected by the National Cancer Institute), the share of people logging into a patient portal on behalf of someone else more than doubled between 2020 and 2024. It went from 24% to 51%.

Half the people navigating these systems aren’t patients. They’re caregivers, like:

- A daughter checking her mother’s lab results after a cancer scare.

- A husband refilling his wife’s prescriptions while she recovers from surgery.

- An adult child scheduling a follow-up for a parent who doesn’t speak English.

- A spouse who has memorized every medication, every specialist, every prior authorization number (because if they don’t, no one will).

They’re exhausted, scared, and running someone else’s health on top of everything going on in their own life. And they are doing it inside systems that were never built to recognize them.

Most patient portals still don’t have a proper caregiver login. The formal proxy access process, where it exists, is often so confusing or slow that caregivers just use the patient’s credentials instead. So there’s no:

- Audit trail

- Role separation

- Way for the system to know who’s logged in asking questions, making decisions, or interpreting results
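One way to picture what role separation and an audit trail would mean in practice is a record that keeps the person acting apart from the patient whose chart is being touched. The sketch below is a minimal Python illustration under that assumption. Every name in it (`Role`, `AccessEvent`, `record_access`, the user IDs) is hypothetical and is not drawn from Copilot Health or any real portal.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Role(Enum):
    PATIENT = "patient"
    CAREGIVER = "caregiver"


@dataclass(frozen=True)
class AccessEvent:
    actor_id: str      # who is actually logged in (hypothetical ID)
    actor_role: Role   # patient acting for self, or caregiver acting as proxy
    patient_id: str    # whose record is being viewed
    action: str        # e.g. "view_lab_result"
    at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


def record_access(log, actor_id, patient_id, action):
    """Append an event; the role is inferred from whether actor and patient match."""
    role = Role.PATIENT if actor_id == patient_id else Role.CAREGIVER
    event = AccessEvent(actor_id, role, patient_id, action)
    log.append(event)
    return event


audit_log = []
record_access(audit_log, "margaret", "margaret", "view_medication_list")
record_access(audit_log, "margaret_daughter", "margaret", "view_lab_result")

# Caregiver activity can now be separated from patient activity.
caregiver_events = [e for e in audit_log if e.actor_role is Role.CAREGIVER]
```

With a borrowed password, both events would arrive with `actor_id` set to `"margaret"`, and the second one would be logged as patient behavior. That is the misread this section describes.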

Copilot Health connects to health records, wearables, and labs. It builds a profile over time based on conversations and data. It learns.

But what is it learning from? And about whom?

If half the behavioral data flowing through these systems is caregiver behavior that the system is reading as patient behavior (usage patterns, questions asked, drop-off points, topics searched at all hours of the night), then the AI being trained on that data has a foundational problem.

Patient-centered AI built on a misread of who the patient actually is isn’t patient-centered. It’s just a more confident version of the same blind spot.

What the AI Actually Learns

Maya Friedman, Director of Product Design and UX at Tidepool, shared an observation about Copilot Health during a CES session in January 2026. She noted that the system synthesizes data across multiple sources to provide guidance and, in doing so, creates a layer of coherence on top of information that was never designed to fit together.

Health records, wearables, and labs all operate on different standards, different levels of reliability, and different contexts.

Copilot doesn’t fix that fragmentation. It builds a coherent surface on top of it.

That coherence is genuinely useful for the person searching for answers at midnight. It’s also the source of risk.

A confident answer that’s built on misidentified behavior is harder to question than an incomplete answer. Think of the person on the other end of that conversation. A caregiver who has learned medical terminology not in school but out of necessity, who is tired and has 17 other things to manage, is not going to interrogate the data sources behind the insight. They’re going to act on it.

There’s a compounding problem that goes beyond accuracy. As these tools learn over time, they build profiles and personalize their responses. They adapt to the user.

But if the system thinks the user it’s serving is Margaret, while the actual user is Margaret’s daughter navigating her mother’s post-cardiac recovery from three states away, then the personalization is wrong from the first conversation. And it compounds with every interaction.

The AI is getting better and better at serving the wrong person.

This isn’t an argument against tools like Copilot Health. It’s an argument that the founders who build these tools should be precise about who they’re actually serving and build them accordingly. The 3 a.m. user isn’t always the patient. Sometimes she’s the person who can’t sleep because she’s worried about someone she loves and doesn’t know who else to ask.

She deserves a system that knows she’s there.

Ownership vs. Control

David Jost, Chief Technology Innovations Officer at the Epilepsy Foundation, said something during the panel that I haven’t been able to stop thinking about.

“Ownership and control are not the same thing.”

He was making a technical point about data governance — about the difference between having rights to your data and having actual agency over how it moves, who sees it, and what it’s used for.

But as a former family caregiver, I heard it as something more personal.

I owned my husband’s story. I was in every appointment. I tracked every medication change, every lab result, every specialist referral across multiple chronic conditions (diabetes, kidney failure, cancer, and limb loss). I knew his conditions better than most of the providers treating him.

But I didn’t control what happened to his data.

When we signed intake forms (and we signed a lot of them), we did it because we needed to get into the room. Steve Winawer, Head of Data, Digital, and Technology at Takeda, described this dynamic plainly during the panel: “You walk into a doctor’s office. You sign the forms because what you want to do is see the doctor. You don’t really read them.”

That consent is what the entire data ecosystem is built on.

Not informed consent in the full sense of the phrase.

Transactional consent. The kind you give because the alternative is not being seen.

For caregivers, this is even more layered. You’re not just consenting on your own behalf. You’re consenting (or not, because often there’s no mechanism to do so separately) on behalf of someone else. Someone who may not be able to read the form themselves. Someone whose data is being collected, moved, and used in ways neither of you will ever fully trace.

Owning your health data and controlling it are two different things. Most of us have the first. Almost none of us have the second.

As AI health tools expand to connect more records, pull more data and build richer profiles, the gap between ownership and control will widen.

And caregivers, who have always been the system’s most active unpaid navigators, will feel that gap the most.

The Longer History

At the end of the panel, I asked about Henrietta Lacks.

For those unfamiliar: Henrietta Lacks was a Black woman whose cancer cells were taken during a medical procedure in 1951, without her knowledge or consent. Those cells, known as HeLa cells, became one of the most important biological tools in modern medicine. They contributed to the polio vaccine, to cancer research, to countless pharmaceutical breakthroughs. The medical system built billions of dollars of value on her biology.

Her family only found out decades later.

I asked the panel: as AI systems become better at attributing value to data — tracking whose information contributed to which insight, which drug, which discovery — what can we learn from Henrietta Lacks about making sure that value flows back to the people it came from?

Heather Flannery, Founder and CEO of AI MINDSystems Foundation, gave the only answer, and it was direct.

She said that the same technologies being developed for computational governance and democratic-scale consent (making it possible to track and trace how data moves through a system) can also administer value attribution. Verifiably, continuously, and at scale.

Contributions of training data, lived experience, insight, problem-framing and caregiving itself are all valuable. None of it is currently tracked, traced, or remunerated.

The same infrastructure that fixes consent, Heather said, can fix attribution.

Henrietta Lacks didn’t consent. Caregivers consent constantly to forms they don’t read, in moments when they have no other choice, on behalf of people who are too sick or too scared to read them either.

The extraction looks different, but the pattern is the same.

The history of health data in America is, in part, a history of taking value from people who were never meant to benefit from the systems they fed. That history didn’t end in 1951. It continues every time a caregiver logs into a portal, answers a chatbot’s questions, uploads a discharge summary, and walks away having contributed data to a system that will use it to build something she will never own and cannot control.

National Minority Health Month exists to name these patterns. The question for this moment in health AI is whether we’re going to repeat them, or build something different.

What Caregivers Should Ask For

This is not an argument against AI in healthcare. The Copilot Health demo showed real capability. The panel included people genuinely committed to getting this right. The conversation about data sovereignty, computational governance, and equitable AI is happening — slowly, imperfectly, but sincerely.

This is an argument for specificity.

Patient-centered design that ignores the caregiver isn’t patient-centered. It’s incomplete. And the window for building these systems correctly is now, before:

- the behavioral data compounds

- the profiles deepen

- the coherence layer becomes too established to question

So here’s what caregivers should be asking for, from the tools being built, from the organizations building them, and from the policymakers shaping the rules:

- A login that knows who you are. Not a workaround. Not a borrowed password. A formal caregiver access model that distinguishes your behavior from the patient’s, maintains an audit trail, and allows the AI to serve you based on your actual role.

- Proxy access that takes minutes, not phone calls. The formal process exists in some systems. It is almost universally too slow, too confusing, and too rarely completed. If half your users are caregivers, that’s not an edge case for product teams to accommodate. That’s their primary use case.

- Consent that means something. Not a form signed under duress. A clear, plain-language explanation of what data is being collected, how it will be used, and what the caregiver retains the right to revoke. Separately from the patient’s consent, because the caregiver is a separate user with separate stakes.

- AI trained on who’s actually in the room. If the behavioral data flowing through these systems includes caregiver behavior, the models need to know that. Not to exclude it — to interpret it correctly. The questions a caregiver asks at 3 a.m. are different from the questions a patient asks. The guidance each one needs is different too.

- Recognition that caregiving is a data contribution. The labor of coordinating care, navigating systems, tracking medications, interpreting results, and advocating in clinical settings generates information that health AI is being built on. That contribution deserves to be visible, and eventually, as the infrastructure Heather described matures, remunerated.
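To make the consent item in the list above a little more concrete, here is a minimal Python sketch of a caregiver consent record that is kept apart from the patient’s and can be revoked one purpose at a time. It is an illustration only. The `ConsentRecord` class, its fields, and the purpose labels are hypothetical and do not reflect any vendor’s schema.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentRecord:
    holder_id: str                              # the person giving consent
    holder_role: str                            # "patient" or "caregiver"
    patient_id: str                             # whose care the data relates to
    purposes: set = field(default_factory=set)  # uses currently allowed

    def grant(self, purpose):
        self.purposes.add(purpose)

    def revoke(self, purpose):
        # discard() does nothing if the purpose was never granted
        self.purposes.discard(purpose)

    def allows(self, purpose):
        return purpose in self.purposes


# Two separate records for the same patient's care (hypothetical IDs).
patient_consent = ConsentRecord("margaret", "patient", "margaret")
caregiver_consent = ConsentRecord("margaret_daughter", "caregiver", "margaret")

patient_consent.grant("model_training")
caregiver_consent.grant("care_coordination")
caregiver_consent.grant("model_training")

# The caregiver withdraws one use without touching the patient's choices.
caregiver_consent.revoke("model_training")
```

The design choice worth noting is that the caregiver’s revocation changes only her own record. The patient’s consent to the same purpose stays exactly as the patient left it.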

If you’re a caregiver navigating any of this, my newsletter Care Without Compromise goes deeper on the practical and systemic dimensions of what it means to manage someone else’s health in a system that wasn’t built for either of you.

The tools are getting smarter. Let’s make sure they’re learning about the right person.

Sources

Health Information National Trends Survey (HINTS) 2024, National Cancer Institute.

PXI Q1 Convening: Building the Patient-Centered AI Ecosystem, National Health Council, March 26, 2026. Microsoft Copilot Health demo presented by Rachel Gruner. Panel quotes from David Jost (Epilepsy Foundation), Heather Flannery (AI MINDSystems Foundation), Steve Winawer (Takeda), Ian Miller (Digital Medicine Society); moderated by Rene Quashie (Consumer Technology Association).